Preparing Your eSTAR 510(k) Cybersecurity Documentation

What FDA reviewers expect in eSTAR Section Q: SBOM, threat model, SPDF evidence, pen test reports, and a traceability matrix that survives RTA screening.

Read article

Deep dives on FDA expectations, threat modeling, penetration testing, SDLC, and the standards your team is being asked to meet.

Brainjacking is the unauthorized control of an implanted neurostimulator. We unpack the attack vectors, clinical consequences, and what manufacturers must build into DBS, SCS, and BCI products.

Read article

FDA clearance is the beginning of your cybersecurity obligations, not the finish line. Postmarket cybersecurity for medical devices is an active, continuous requirement that most manufacturers underestimate until a problem forces their hand. Most invest significant resources building premarket docum

Read article

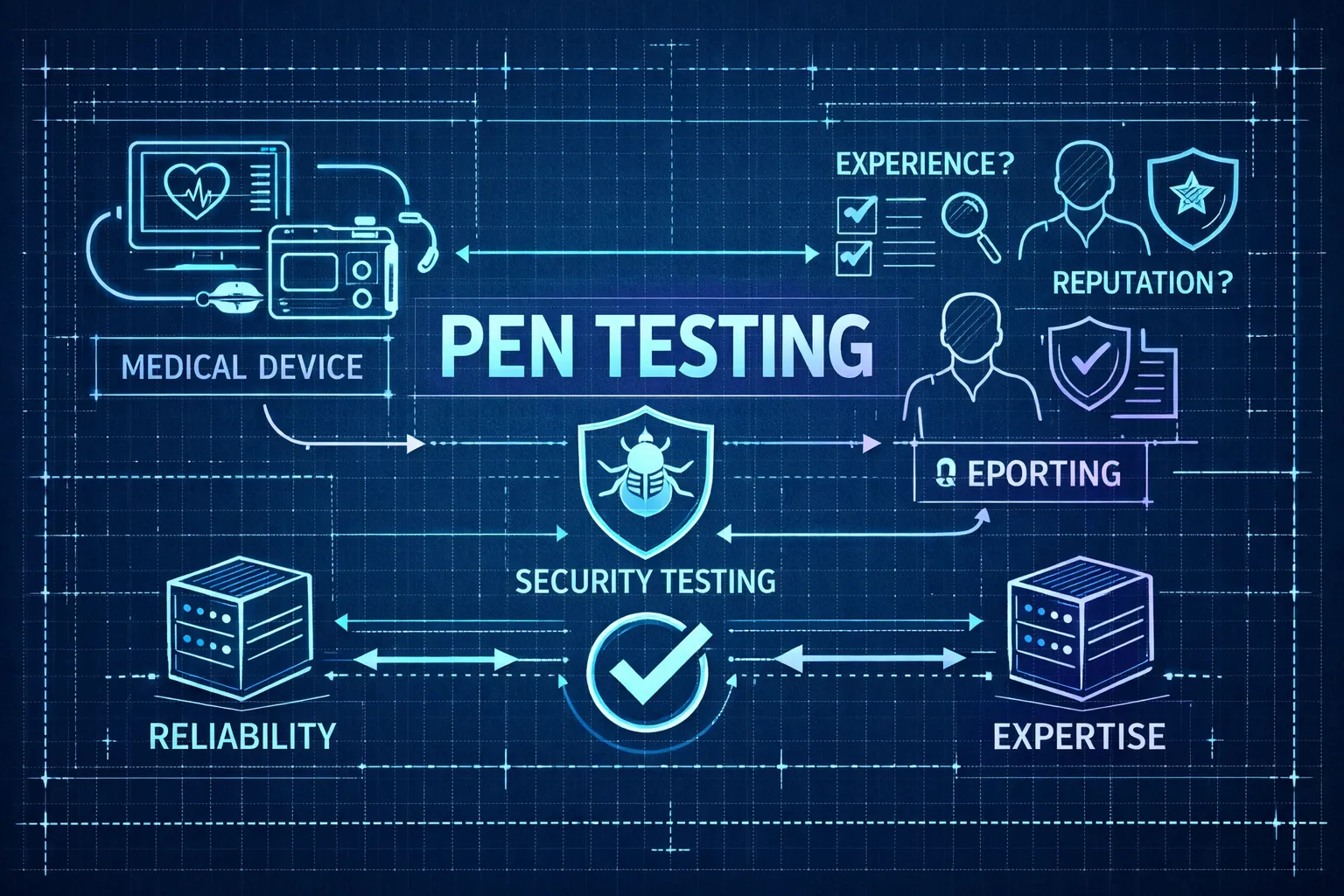

When penetration test reports are vague, incomplete, or written to enterprise IT standards rather than medical device requirements, FDA reviewers issue deficiencies that can delay clearance, sometimes requiring a full re-test before you can respond. If you're selecting a provider for medical device

Read article

Receiving an FDA cybersecurity Additional Information Request (AIR) doesn't mean your submission is dead. It means the clock is ticking, and the next move has to be precise. FDA issues these requests when reviewers find specific, documented gaps in your cybersecurity package, and they expect every g

Read article

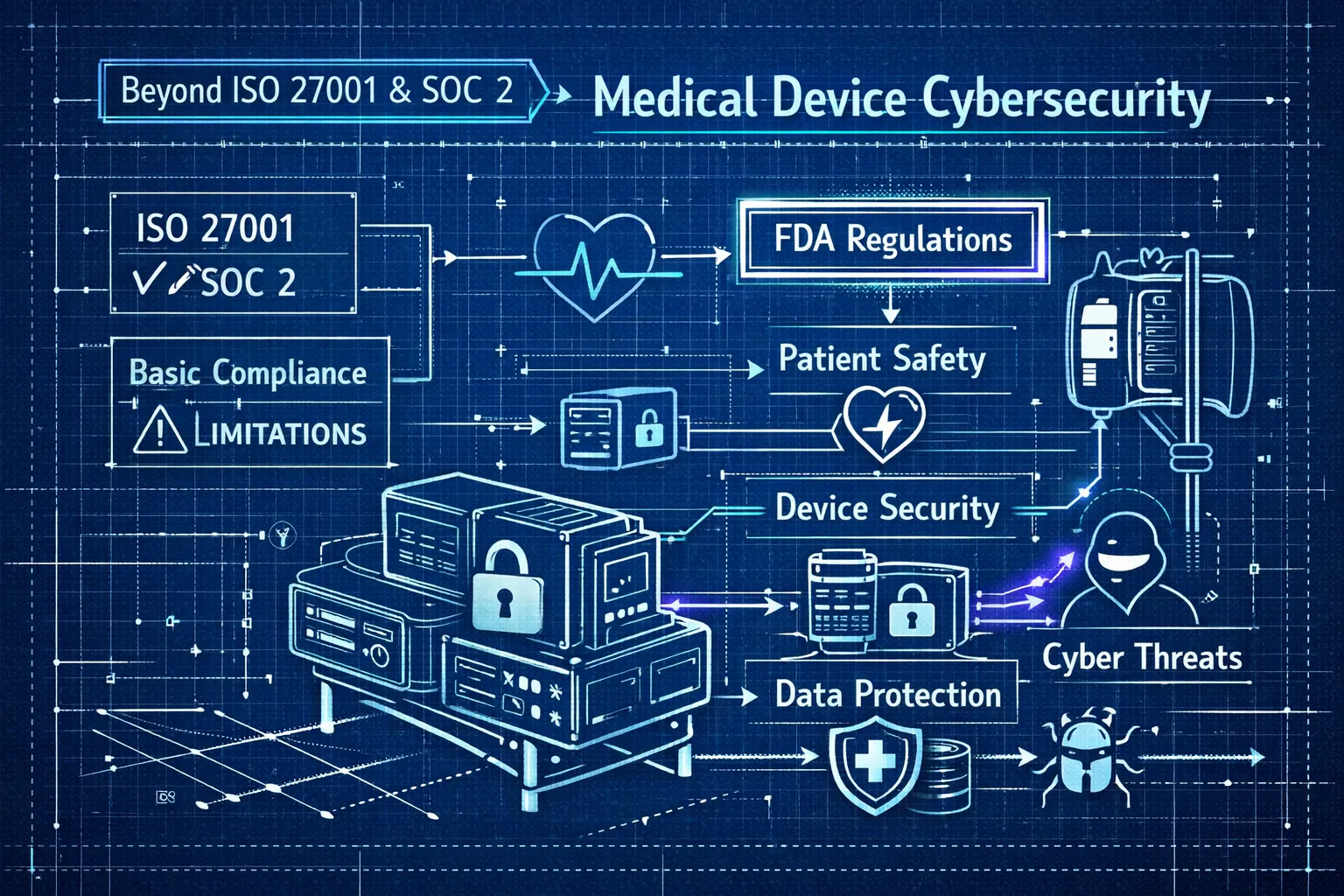

Here's a pattern Blue Goat Cyber sees regularly: a medical device manufacturer arrives at premarket submission with an ISO 27001 certificate in hand, convinced their cybersecurity story is complete. Weeks later, a deficiency letter arrives. The FDA isn't questioning their information security postur

Read article

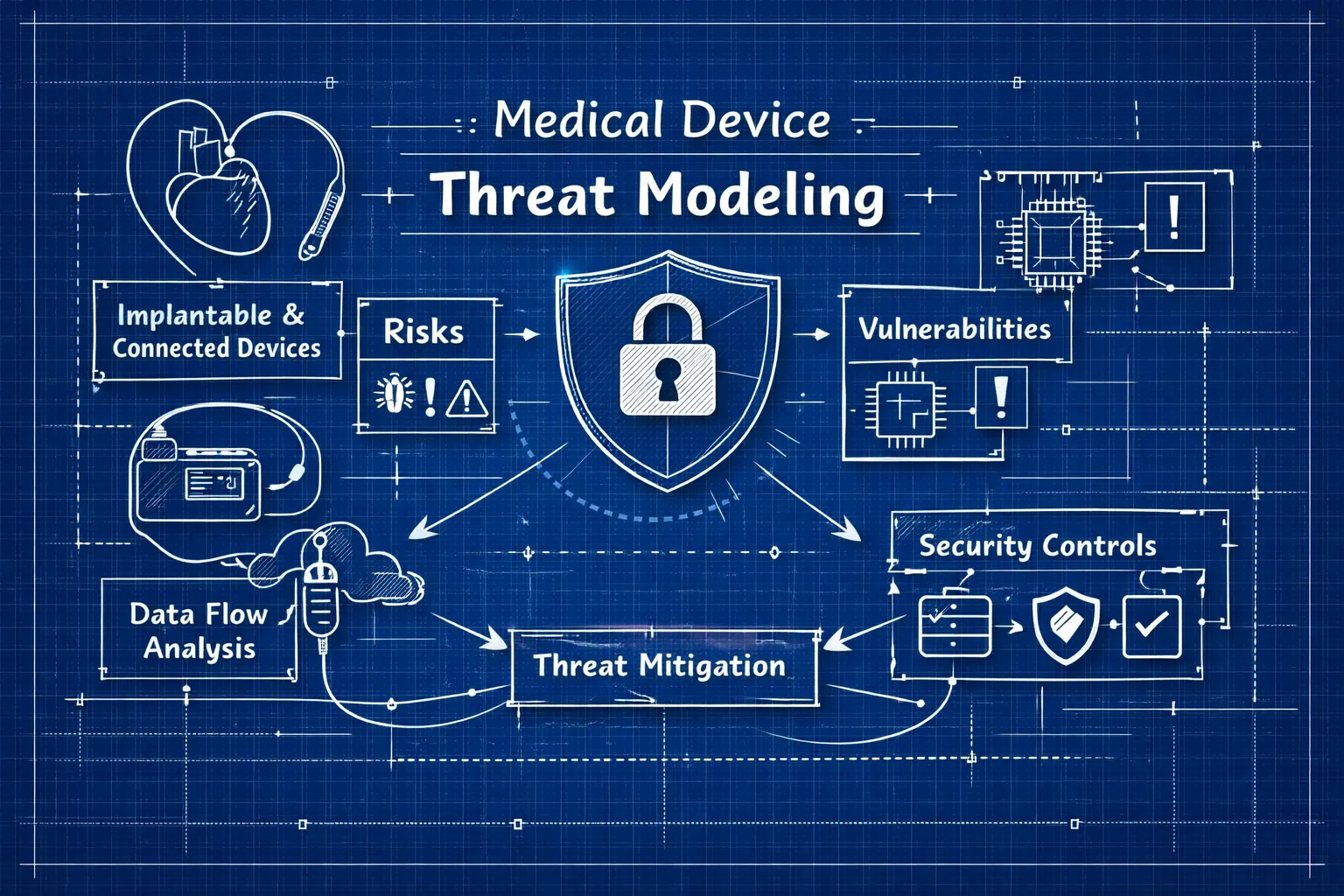

If you're asking how to conduct a cybersecurity threat model for a connected or implantable medical device, the first thing to understand is that this is not the same exercise as modeling a web application or enterprise network. The stakes are categorically different. A missed attack vector on a hos

Read article

Understanding what causes the FDA to issue a cybersecurity deficiency for medical devices starts with one uncomfortable truth: most deficiencies have nothing to do with a bad device. The device might be perfectly secure. The submission just didn't prove it. FDA reviewers work from documentation, not

Read article

Most 510(k) submissions that stall don't fail on clinical data. They fail on cybersecurity. FDA reviewers are issuing Additional Information (AI) requests and outright Refuse-to-Accept (RTA) holds at a rate that has become the primary timeline risk for connected device submissions. The documentation bar has

Read article

Device teams consistently make the same mistake with SPDF (Secure Product Development Framework) cybersecurity documentation: they treat it as a paperwork exercise and assemble artifacts at the end of development instead of generating them as engineering work happens. The result is a submission that

Read article

Performing a thorough cybersecurity risk analysis for a medical device isn't optional once your product qualifies under Section 524B of the FD&C Act. The FDA's 2026 cybersecurity guidance is direct: if your device meets the "cyber device" threshold, you must demonstrate reasonable assurance of cyber

Read article

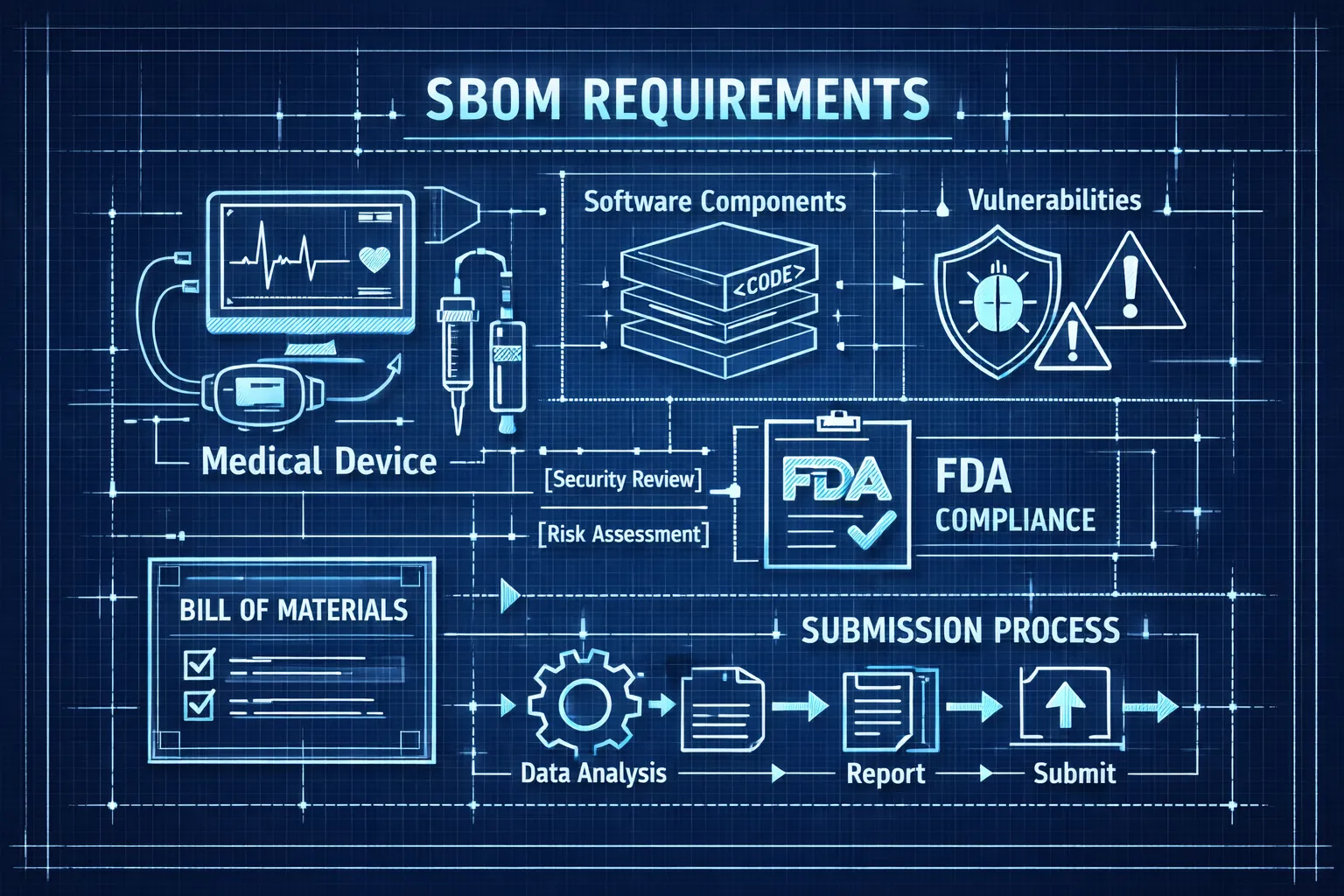

Every premarket submission for a cyber device now legally requires a software bill of materials, and missing it can get your submission refused outright. Building a compliant medical device SBOM isn't optional under Section 524B of the FD&C Act, enforced since October 2023. This isn't a recommendati

Read article